This is 馮 志聖 (Mike) from the R&D Office.

Contents

- Introduction

- Remote control on ROS (Robot Operating System)

- Conclusion

- Future work

- Other

- Reference

Introduction

With the rapid development of science and technology and the spread of the Internet, people are pursuing a better quality of life, and comfortable, enjoyable work has become the first choice for many. At the same time, labor-intensive jobs and jobs with long overtime hours are increasingly hard to fill, creating a labor gap. Using robots in place of humans to fill this gap is an inevitable trend. In daily life, we already benefit from services provided by robots, such as robot vacuum cleaners, restaurant front desk services, and home delivery services. Many of the products we use are also made by robots in factories. Robots have gradually become indispensable to production.

In recent years, because of the spread of the epidemic and for safety reasons, many people have chosen to work from home. Software and tools for remote collaboration have gradually gained attention. Among them, video chat services, which create more opportunities for discussion, have grown especially dramatically. Smooth meetings let members reach a consensus quickly. As a result, working remotely is becoming less of a barrier, but remote collaboration still has many limitations, and many researchers and developers have discussed how to overcome them.

How are these two trends related? Remotely controlling a robot is the better choice in at least two situations. First, some areas of the world are hazardous to humans and other living things; remote control lets robots perform tasks in these dangerous areas. Second, some urgent tasks require help from senior specialists in other countries. When the epidemic, time, and distance make travel impractical, those specialists can operate a robot remotely to carry out the task.

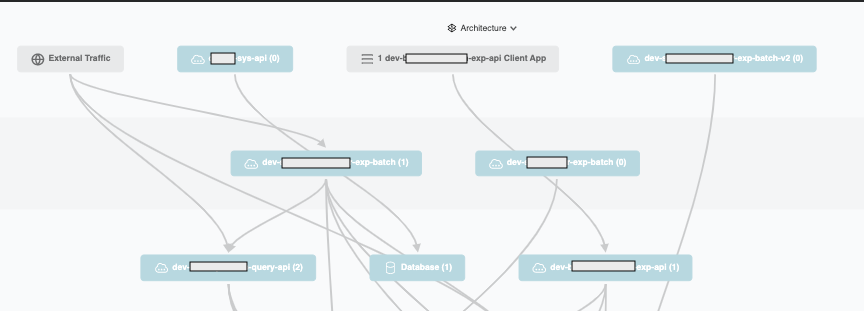

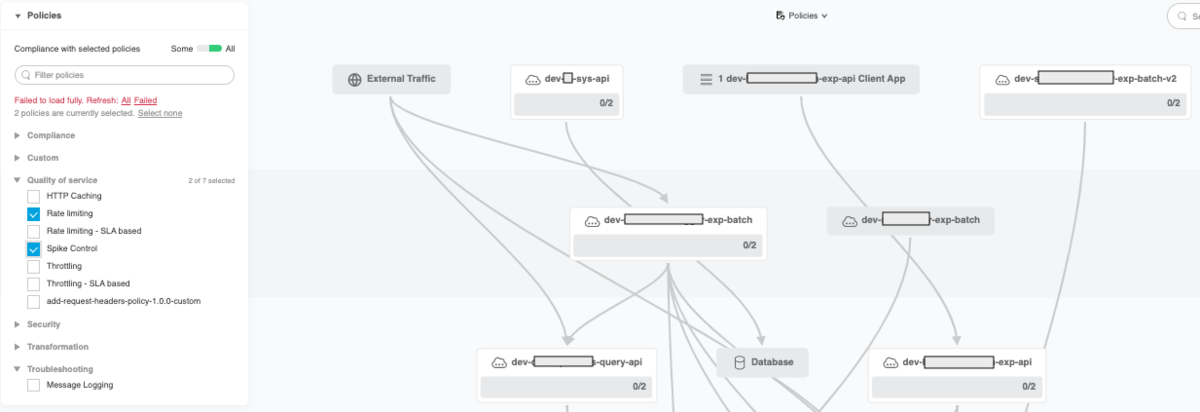

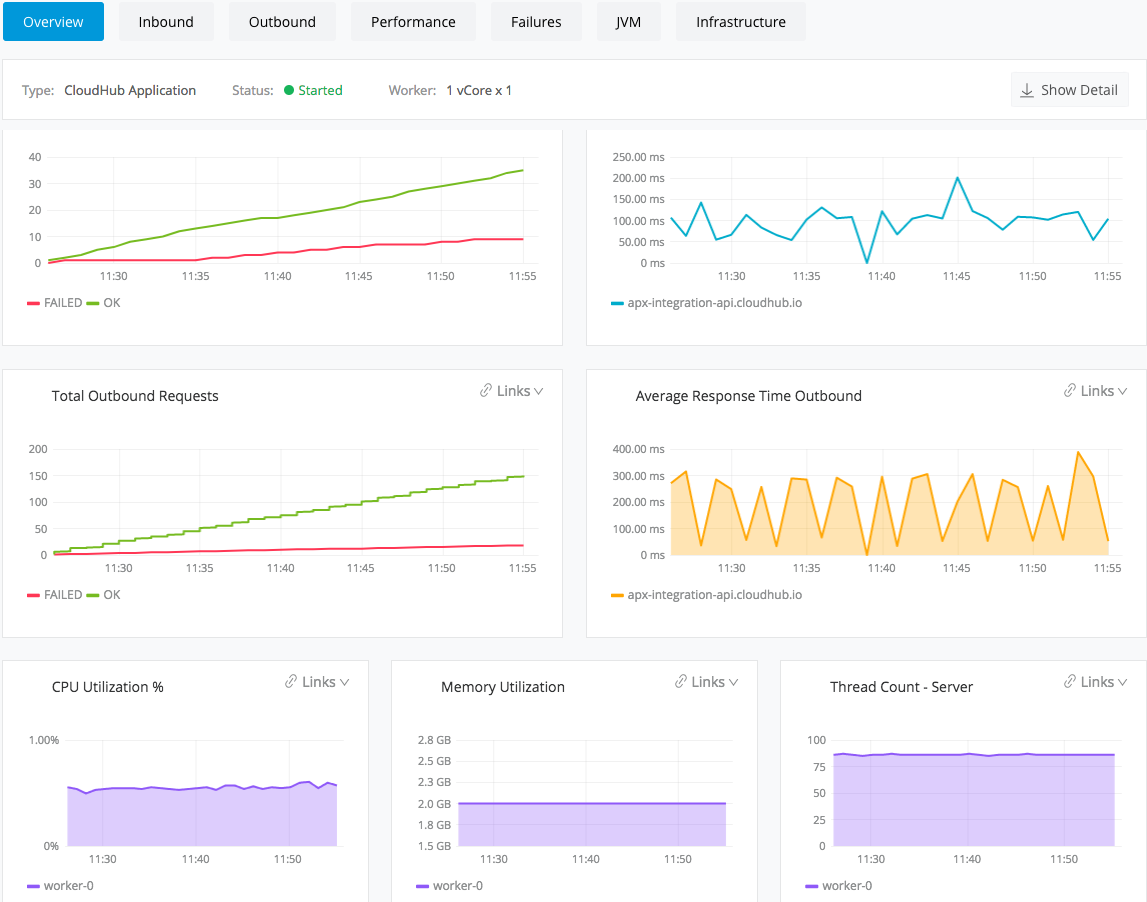

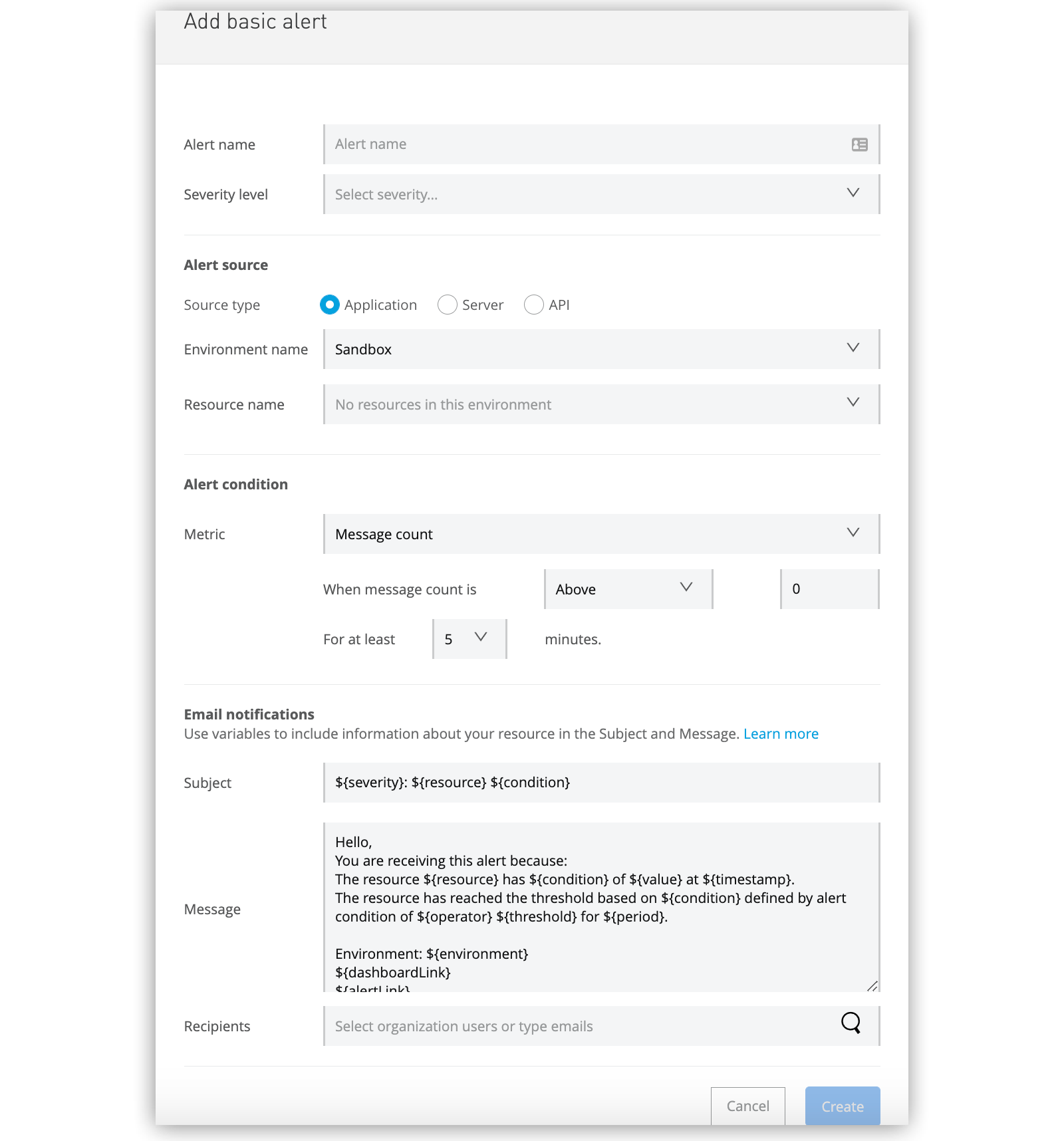

In this blog post, we discuss a fast and effective way to build a robot system with a simple interface for operation and monitoring, and verify the feasibility of executing tasks remotely.
